Why Your On-Call Is Unsustainable (And How to Fix It)

A three-person team I worked with had 47 pages in a single week. Most were transient issues that resolved themselves before anyone could investigate. The team was exhausted, resentful, and starting to ignore their pagers entirely — which is the worst possible outcome for an on-call rotation.

They didn’t fix this by hiring more people. They fixed it by deleting alerts.

Within two months, pages dropped to 2-3 per week. The team started sleeping again. The counterintuitive lesson: the fix was fewer, better alerts, not more people.

One metric predicts whether your on-call will burn out your team: signal-to-noise ratio. Here’s how to measure it, recognize when it’s killing you, and fix it before someone quits.

What Signal-to-Noise Ratio Actually Measures

Signal-to-noise ratio is the percentage of pages that required human action. Calculate it monthly: pages that required someone to do something divided by total pages.

- Greater than 80%: Healthy

- 50-80%: Concerning

- Below 50%: Your alerting is broken

The math is simple but brutal. A three-person team can sustainably handle maybe 3-5 pages per person per week. That’s the ceiling. Waste those pages on false positives and auto-resolving transients, and you’ve got nothing left for real incidents.

Here’s an example from a real team audit:

- 60 total pages this month

- 35 required action

- 20 auto-resolved before anyone could respond

- 5 were false positives

- SNR: 58% (concerning, needs work)

Those 20 auto-resolved pages are the killer. If an alert fires and resolves before you can acknowledge it, what did it accomplish? It woke someone up, spiked their cortisol, and then… nothing. Keep interrupting people for non-events and they’ll start treating every page as a non-event.
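The calculation is small enough to script. Here is a minimal sketch in Python using the audit figures above; the function names are made up for illustration, and the band boundaries follow the three ranges listed earlier (treating exactly 80% as "concerning" is my reading of them).

```python
def signal_to_noise(actionable_pages: int, total_pages: int) -> float:
    """Return the percentage of pages that required human action."""
    if total_pages <= 0:
        raise ValueError("total_pages must be positive")
    return 100.0 * actionable_pages / total_pages


def classify_snr(snr_percent: float) -> str:
    """Map an SNR percentage onto the three bands described above."""
    if snr_percent > 80:
        return "healthy"
    if snr_percent >= 50:
        return "concerning"
    return "broken"


# Figures from the audit example: 60 pages, 35 of which required action.
snr = signal_to_noise(actionable_pages=35, total_pages=60)
print(f"SNR: {snr:.0f}% ({classify_snr(snr)})")
```

Run it once a month against whatever export your paging tool offers. The hard part is the input, not the code: someone has to decide, page by page, whether action was really required.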

Every alert outcome falls into one of four categories, each with a target:

| Alert Outcome | Target | Action If Exceeded |
|---|---|---|
| Required immediate action | > 70% | Good alert, keep it |
| Auto-resolved before ack | < 15% | Add duration requirement |
| False positive | < 5% | Fix detection logic |
| Could have waited | < 10% | Demote severity |
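To check a month of pages against those targets, you can tag each page with its outcome and compare the shares. The sketch below assumes you have already labeled every page by hand; the label strings and the `outcome_report` helper are hypothetical, and the sample data reuses the audit numbers from earlier.

```python
from collections import Counter

# Targets from the table above. "min" means the share should stay above the
# target; "max" means it should stay below it.
TARGETS = {
    "required_action": ("min", 70.0),
    "auto_resolved": ("max", 15.0),
    "false_positive": ("max", 5.0),
    "could_have_waited": ("max", 10.0),
}


def outcome_report(outcomes):
    """Return {label: (share_percent, within_target)} for a list of labels."""
    if not outcomes:
        raise ValueError("need at least one page to report on")
    counts = Counter(outcomes)
    total = len(outcomes)
    report = {}
    for label, (kind, target) in TARGETS.items():
        share = 100.0 * counts[label] / total
        within = share > target if kind == "min" else share < target
        report[label] = (share, within)
    return report


# The audit example: 35 actionable, 20 auto-resolved, 5 false positives.
pages = (
    ["required_action"] * 35
    + ["auto_resolved"] * 20
    + ["false_positive"] * 5
)
report = outcome_report(pages)
for label, (share, within) in report.items():
    status = "ok" if within else "off target"
    print(f"{label}: {share:.1f}% ({status})")
```

In this example three of the four categories miss their target, and the auto-resolved share is more than double its limit, which matches the intuition that transients are the first thing to tune.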

The weekly review ritual makes this actionable. During every on-call handoff, spend 30 minutes reviewing every page from the past week:

| Question | Warning Answer | What It Reveals |
|---|---|---|
| Did this require human action? | No | Alert is noise: delete or demote it |
| Could it have been prevented? | Yes | Missing automation or monitoring gap |
| Could it have waited until morning? | Yes | Severity is miscategorized |
| Was the runbook sufficient? | No | Documentation debt |
| Should this alert exist at all? | No | Legacy cruft: delete it |

The answers drive improvements. Alert too sensitive? Tune the threshold. Could be automated? Build auto-remediation. Provided no value? Delete it. The goal is for every alert to earn its place in the rotation.

Recognizing Burnout Before It’s Too Late

The insidious thing about on-call burnout is that it accumulates slowly. By the time it’s obvious, someone is already job hunting.

Individual burnout shows up in behavior first. Someone starts acknowledging alerts but not actually investigating them. Response times gradually increase. They snooze alerts instead of addressing them. There’s resentment in handoff meetings — subtle comments about the unfairness of the rotation or the quality of alerts.

Emotional signs follow: dread when an on-call shift approaches, anxiety about phone notifications even when off rotation, the feeling that you can never truly disconnect. Eventually physical symptoms emerge — sleep disruption that persists even off rotation, exhaustion that doesn’t recover between shifts.

At the team level, watch for alerts being suppressed rather than fixed, runbooks not being updated, post-incident reviews getting skipped, or transfer requests. These are all signs that people have given up on improving the system and are just trying to survive it.

The numbers tell a story too:

| Metric | Healthy | Concerning | Unsustainable |
|---|---|---|---|
| Pages per shift | < 3 | 3-7 | > 7 |
| Night pages per week | < 1 | 1-3 | > 3 |
| Auto-resolve rate | < 20% | 20-40% | > 40% |

If you’re consistently in the “concerning” column, you have time to fix things. If you’re in “unsustainable,” someone is probably already looking for another job. For a three-person team, losing one person to burnout is catastrophic — it’s not just losing a teammate, it’s losing one-third of your on-call capacity.
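If you want these numbers on a dashboard rather than in someone's head, the banding is easy to encode. This is a rough sketch: the metric keys and the `classify` helper are names I made up, and the sample snapshot is invented purely to exercise the thresholds from the table.

```python
# (healthy_below, unsustainable_above) per metric, taken from the table above.
THRESHOLDS = {
    "pages_per_shift": (3, 7),
    "night_pages_per_week": (1, 3),
    "auto_resolve_rate_pct": (20, 40),
}


def classify(metric: str, value: float) -> str:
    """Place a metric value in the healthy / concerning / unsustainable band."""
    healthy_below, unsustainable_above = THRESHOLDS[metric]
    if value < healthy_below:
        return "healthy"
    if value > unsustainable_above:
        return "unsustainable"
    return "concerning"


# Invented snapshot for illustration only.
snapshot = {
    "pages_per_shift": 5,
    "night_pages_per_week": 4,
    "auto_resolve_rate_pct": 33.3,
}
health = {metric: classify(metric, value) for metric, value in snapshot.items()}
for metric, band in health.items():
    print(f"{metric}: {snapshot[metric]} -> {band}")
```

A single "unsustainable" reading is worth a conversation. The same reading several weeks in a row is the pattern to act on.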

The night pages metric deserves special attention. Sleep disruption has outsized effects on cognitive function, mood, and long-term health. A single 3 AM page doesn’t just cost the hour it takes to resolve — it costs the next day’s productivity, and if it happens repeatedly, it compounds into chronic exhaustion. Track after-hours pages separately from daytime pages. If someone is getting more than one night page per week on average, that’s the first thing to fix — not through better scheduling, but by eliminating or automating whatever is waking them up.

Night pages are disproportionately costly. One 3 AM page has more impact on a person than three 2 PM pages. Treat after-hours alerts as the highest-priority candidates for elimination or automation.
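Tracking night pages separately only takes a few lines once you have page timestamps. The sketch below assumes timestamps are already in the responder's local time and treats 22:00 to 07:00 as "night"; both the window and the function names are assumptions to adjust for your team.

```python
from datetime import datetime

# Assumed night window in the responder's local time: 22:00 up to 07:00.
NIGHT_START_HOUR = 22
NIGHT_END_HOUR = 7


def is_night(ts: datetime) -> bool:
    """True if the timestamp falls inside the assumed night window."""
    return ts.hour >= NIGHT_START_HOUR or ts.hour < NIGHT_END_HOUR


def night_pages_per_week(page_times, weeks: float) -> float:
    """Average number of night pages per week over the given period."""
    if weeks <= 0:
        raise ValueError("weeks must be positive")
    night_count = sum(1 for ts in page_times if is_night(ts))
    return night_count / weeks


# Invented sample: four pages over two weeks, three of them at night.
page_times = [
    datetime(2024, 1, 3, 3, 5),    # 03:05, night
    datetime(2024, 1, 4, 14, 30),  # 14:30, day
    datetime(2024, 1, 9, 23, 10),  # 23:10, night
    datetime(2024, 1, 11, 2, 45),  # 02:45, night
]
average = night_pages_per_week(page_times, weeks=2)
print(f"Night pages per week: {average:.1f}")
```

Here the average comes out above one night page per week, which by the rule above makes those three alerts the first candidates to eliminate or automate.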

The Fix: Delete, Tune, or Automate

The good news: burnout caused by bad alerting is fixable at the source. You don’t need to hire more people or implement elaborate rotation schemes. You need fewer, better alerts.

Every alert must earn its place in the rotation. If it doesn’t require immediate human action, it shouldn’t page. If it can be automated, automate it. If it fires regularly without leading to action, delete it.

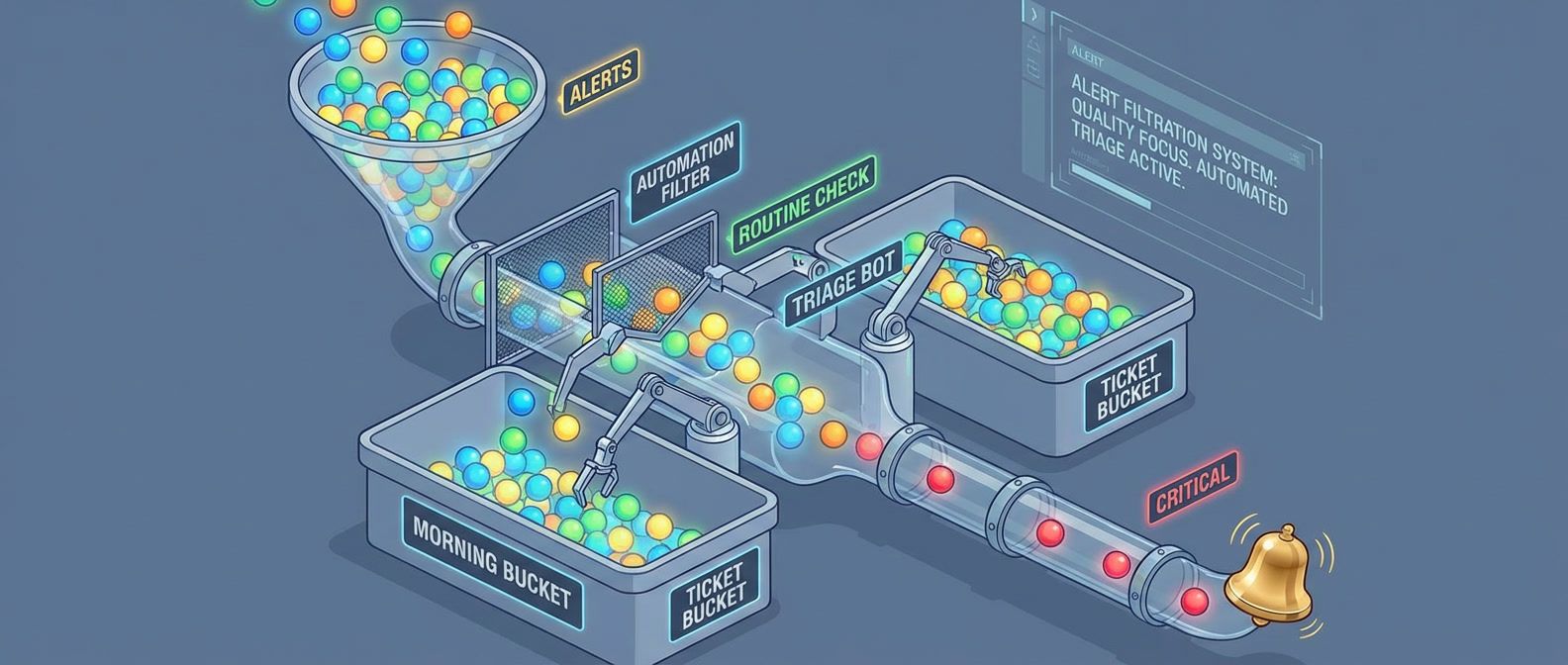

For each problematic alert, you have three options:

- Delete it: If the alert never leads to action. This sounds scary, but an alert that nobody acts on is worse than no alert — it trains your team to ignore pages. If you're nervous, demote it to a Slack notification for a month and see if anyone notices.

- Tune it: If the alert fires too often or at the wrong times. Add a duration requirement so transient spikes don't page (require the condition to persist for 5 minutes instead of firing immediately). Add hysteresis so alerts don't flap (fire at 90%, resolve at 80%). Adjust thresholds based on actual behavior rather than theoretical limits.

- Automate it: If the fix is always the same. If every disk space alert ends with "clear /tmp and rotate logs," that's not a human problem — that's a script. Auto-remediate the common case, only escalate to a human if automation fails.
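The two tuning techniques combine naturally. Most monitoring systems have their own way to express a duration requirement, so treat the following as a plain-Python illustration of the logic rather than any tool's configuration. The `HysteresisAlert` class is hypothetical; the 90/80 thresholds and the 5-minute hold come from the example above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HysteresisAlert:
    fire_at: float = 90.0        # start counting when the value reaches this
    resolve_at: float = 80.0     # once firing, resolve only at or below this
    hold_seconds: float = 300.0  # condition must persist this long to fire
    firing: bool = False
    breach_started: Optional[float] = None

    def observe(self, value: float, now: float) -> bool:
        """Feed one sample (value, time in seconds); return the firing state."""
        if self.firing:
            # Hysteresis: stay firing until the value drops to resolve_at.
            if value <= self.resolve_at:
                self.firing = False
                self.breach_started = None
            return self.firing
        if value >= self.fire_at:
            # Duration requirement: remember when the breach began.
            if self.breach_started is None:
                self.breach_started = now
            if now - self.breach_started >= self.hold_seconds:
                self.firing = True
        else:
            # A dip below fire_at resets the clock, so transients never page.
            self.breach_started = None
        return self.firing


alert = HysteresisAlert()
samples = [(95, 0), (85, 60), (95, 120), (96, 420), (85, 480), (79, 540)]
states = [alert.observe(value, now) for value, now in samples]
print(states)
```

The first spike at 95% never pages because it clears within a minute. The second breach lasts the full five minutes and fires, stays firing at 85% because that is still above the resolve threshold, and clears only once the value drops to 79%.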

The biggest win for most teams is after-hours filtering. Not everything needs to wake someone up at 3 AM. Implement time-based routing: P1 (service down) pages anytime, P2 (degraded but functional) pages during business hours only, P3 (needs attention but not urgent) never pages.

This isn’t ignoring problems — it’s acknowledging that “one replica down out of three” at 2 AM doesn’t justify waking someone up when the service is still functional. The on-call person can check in the morning.
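The routing rule itself fits in one small function. This sketch assumes business hours of 09:00 to 18:00, Monday to Friday, in the on-call person's local time; those hours and the function names are placeholders. What happens to alerts that do not page (a ticket, a chat message) is left out here and would depend on your tooling.

```python
from datetime import datetime

# Assumed business hours in local time: Monday-Friday, 09:00 up to 18:00.
BUSINESS_START_HOUR = 9
BUSINESS_END_HOUR = 18


def is_business_hours(when: datetime) -> bool:
    """True on weekdays between the assumed start and end hours."""
    is_weekday = when.weekday() < 5  # Monday is 0, Sunday is 6
    return is_weekday and BUSINESS_START_HOUR <= when.hour < BUSINESS_END_HOUR


def should_page(severity: str, when: datetime) -> bool:
    """Apply the time-based routing rule: P1 always, P2 in hours, P3 never."""
    if severity == "P1":
        return True
    if severity == "P2":
        return is_business_hours(when)
    if severity == "P3":
        return False
    raise ValueError(f"unknown severity: {severity!r}")


wednesday_2am = datetime(2024, 1, 3, 2, 0)
wednesday_2pm = datetime(2024, 1, 3, 14, 0)
for severity in ("P1", "P2", "P3"):
    print(severity, should_page(severity, wednesday_2am),
          should_page(severity, wednesday_2pm))
```

Under this rule the "one replica down out of three" alert would be a P2: silent at 2 AM, a page at 2 PM.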

The weekly review is the highest-leverage practice for on-call sustainability. Thirty minutes per week of deliberate improvement compounds into dramatically better on-call within a quarter.

If your page budget is consistently exceeded despite these efforts, there’s a nuclear option: stop feature work until alerting is fixed. This sounds dramatic, but reliability debt is real debt. A team that can’t sleep can’t ship features either. Sometimes you need to stop digging before you can climb out.

Start Here

The team I mentioned at the start didn’t need a new rotation schedule or a new incident management platform. They needed fewer, better alerts. The constraint of being a small team forced discipline that larger teams often lack — when you can’t spread the pain across twenty people, you have to actually fix the problems.

If you take one thing from this article, make it the weekly review. Thirty minutes during each on-call handoff, systematically examining every page from the past week. That single practice, consistently applied, will transform your on-call within a quarter.

Start this week:

1. Calculate your signal-to-noise ratio. If it's below 80%, you have work to do.

2. Schedule your first weekly review during the next on-call handoff.

3. Pick one high-volume alert and either tune it, automate it, or delete it.

The measure of good on-call isn’t how many incidents you handle — it’s how few incidents require handling. A small team with excellent alert hygiene sleeps better than a large team drowning in noise.
